August 30, 2018

Why Use Kubernetes In Your Next Project

Seriously, what is so special about container orchestration tools? Let’s unpack Kubernetes and see what it has in store.

If you haven’t been living under a rock for several months now, you've probably heard about Kubernetes, aka Kube or K8s, one of the best container orchestration tools on the market. At first sight, Kubernetes might seem like rocket science compared to Docker Compose. However, as a result of putting slightly more effort into the initial setup, you get a scalable, flexible, and durable Cloud platform well-suited for microservices.

What is a container?

Simply put, a container is a package that contains application code and all the dependencies needed to run it. Applications inside a container are isolated from the rest of the host system and run the same way regardless of the environment.

Unlike virtual machines, containers share the host’s operating system kernel, so they don’t have to go through a full operating system boot. That’s why they start and stop faster and use disk, memory, and processor capacity more efficiently. Besides, you don’t have to keep in mind which language and frameworks were used to write an app, because it is packaged into a container with everything it needs (runtime, libraries, etc.) to be safely migrated and deployed in any environment.

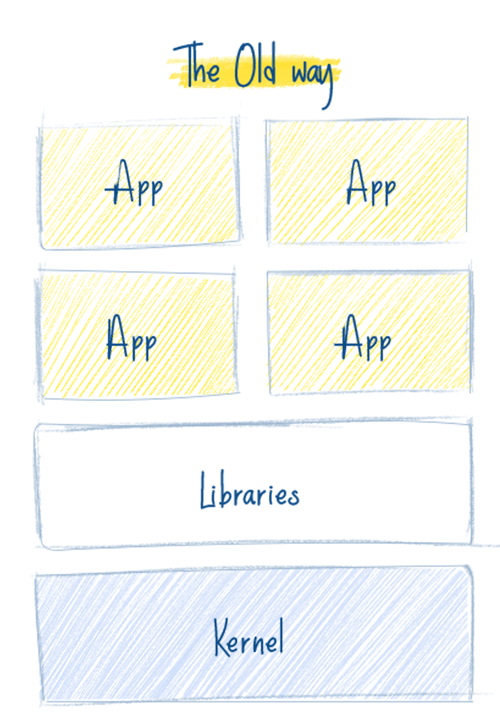

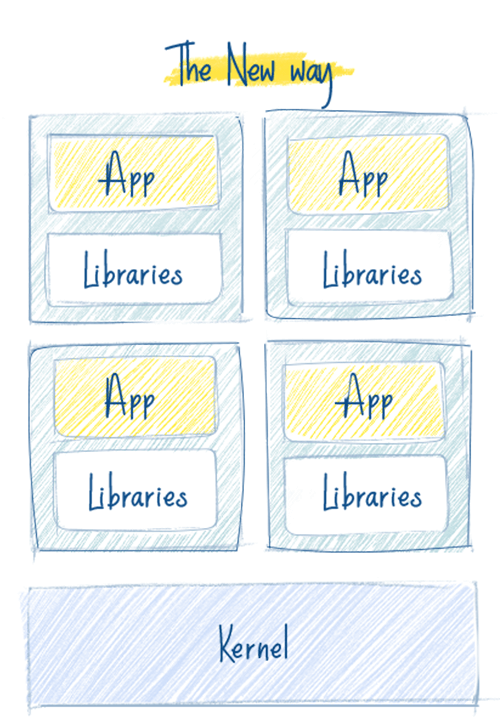

In a legacy model (on the left), apps are deployed directly on servers or virtual machines. By contrast, in a containerized infrastructure each app is packaged into a separate container, which means it will start quicker, scale smarter, and run smoother in any environment.

What is Kubernetes?

Kubernetes is an open source project for managing clusters of containerized applications as a unified system. It manages the scalability, replication, and health of pods (groups of one or more containers deployed together) and solves the problem of launching and balancing those pods across a large number of hosts (nodes). The project was first released by Google and is now supported by many companies, such as Microsoft, Red Hat, IBM, and Docker.
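To make “launching and balancing pods across nodes” a bit more concrete: the real kube-scheduler works in two phases, first filtering out nodes that can’t run a pod and then scoring the remaining ones with a set of plugins. The toy Python model below imitates that filter-then-score idea with a single made-up scoring rule (prefer the node with the most capacity left over). `Node`, `Pod`, and `schedule` are hypothetical names for illustration, not Kubernetes APIs.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu_free: float  # free CPU cores
    mem_free: int  # free memory in MiB
    pods: list[str] = field(default_factory=list)


@dataclass
class Pod:
    name: str
    cpu: float  # requested CPU cores
    mem: int  # requested memory in MiB


def schedule(pod: Pod, nodes: list[Node]) -> Node | None:
    """Place one pod using a simplified filter-then-score approach."""
    # Filter: drop nodes that can't fit the pod's CPU and memory request.
    feasible = [n for n in nodes if n.cpu_free >= pod.cpu and n.mem_free >= pod.mem]
    if not feasible:
        return None  # in a real cluster the pod would stay Pending

    # Score (hypothetical rule): capacity left on the node after placing the pod.
    def leftover(n: Node) -> float:
        return (n.cpu_free - pod.cpu) + (n.mem_free - pod.mem) / 1024

    best = max(feasible, key=leftover)  # ties go to the first node in the list
    best.cpu_free -= pod.cpu
    best.mem_free -= pod.mem
    best.pods.append(pod.name)
    return best


nodes = [Node("node-a", 4.0, 8192), Node("node-b", 4.0, 8192)]
for i in range(1, 4):
    chosen = schedule(Pod(f"web-{i}", 1.0, 1024), nodes)
    print(f"web-{i} ->", chosen.name if chosen else "Pending")
```

With two identical nodes, this rule alternates placements (node-a, node-b, node-a), which is the “balancing” part in miniature.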

When writing an app that will later be containerized, it’s better to have a microservices approach in mind from the very start. Otherwise, if the app is written as a monolith, it will be difficult to package it into containers later on.

Let’s take a quick glance at some of the most notable advantages of Kubernetes in comparison to virtual machines.

1. One platform to rule them all

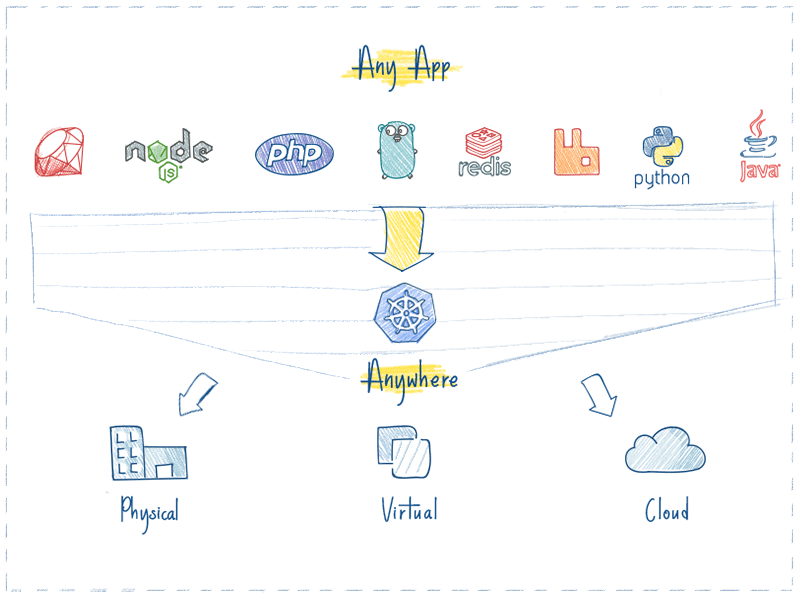

With Kubernetes, deploying any application is as easy as pie. In general, if an app can be packaged into a container, Kubernetes can run it.

Whatever language or framework was used to write an app (Java, Python, Node.js, etc.), Kubernetes is able to safely launch it in any environment, be it a physical server, virtual machine, or Cloud.

2. Cloud agnosticism

Have you ever tried to migrate an infrastructure from, let’s say, GCP to AWS or Azure? With Kubernetes you don't have to worry about the characteristics of a Cloud services provider because it can work on any of them. Design your infrastructure once and run it anywhere. Cool, isn’t it?

Kubernetes runs on all the major Cloud providers, such as Google Cloud, Amazon Web Services, and Microsoft Azure. It also works well on CloudStack, OpenStack, oVirt, Photon, and vSphere.

3. High efficiency in utilizing resources

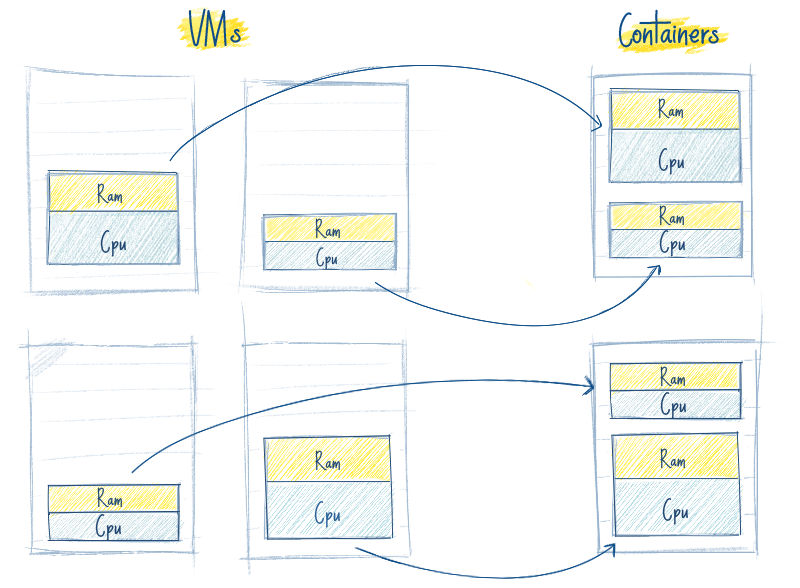

Look at the picture above. On the left, you see four virtual machines, where the running applications are colored in yellow and blue, while the white space is unused memory and processor capacity. On the right, you see the same applications packaged into containers. Can you feel the difference?

Kubernetes helps save costs and utilize memory and processor resources efficiently

When nodes aren’t working to their full capacity, Kubernetes (together with the Cluster Autoscaler component) can move their pods to other nodes and shut the underused nodes down, so they stop wasting resources. Besides, if a node goes down for some reason, Kubernetes re-creates the pods that were running on it and launches them on other nodes; data survives the move as long as it is kept in persistent storage rather than inside the container.
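Why does packing pods tightly free up whole machines? The sketch below is not what Kubernetes literally runs (its scheduler places pods one at a time, and removing idle nodes is the Cluster Autoscaler’s job). It swaps in a classic bin-packing heuristic, first-fit decreasing, to show how six apps that would each occupy a VM fit onto two nodes once they are packed by their CPU requests. The function name and all numbers are hypothetical.

```python
from __future__ import annotations


def pack_pods(pod_cpu: list[float], node_capacity: float) -> list[list[float]]:
    """First-fit decreasing: place the largest requests first, each on the first node with room."""
    nodes: list[list[float]] = []  # each inner list holds the CPU requests placed on one node
    for cpu in sorted(pod_cpu, reverse=True):
        if cpu > node_capacity:
            raise ValueError(f"request {cpu} exceeds node capacity {node_capacity}")
        for node in nodes:
            if sum(node) + cpu <= node_capacity:
                node.append(cpu)
                break
        else:
            nodes.append([cpu])  # no existing node has room, so another node is needed
    return nodes


# Six apps that would otherwise each occupy a VM, packed onto 4-core nodes.
requests = [1.5, 0.5, 1.0, 2.0, 0.5, 1.5]
layout = pack_pods(requests, node_capacity=4.0)
print(len(layout), layout)  # 2 [[2.0, 1.5, 0.5], [1.5, 1.0, 0.5]]
```

Sorting the largest requests first is what keeps the result tight: small pods end up filling the gaps left by big ones instead of forcing a new node.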

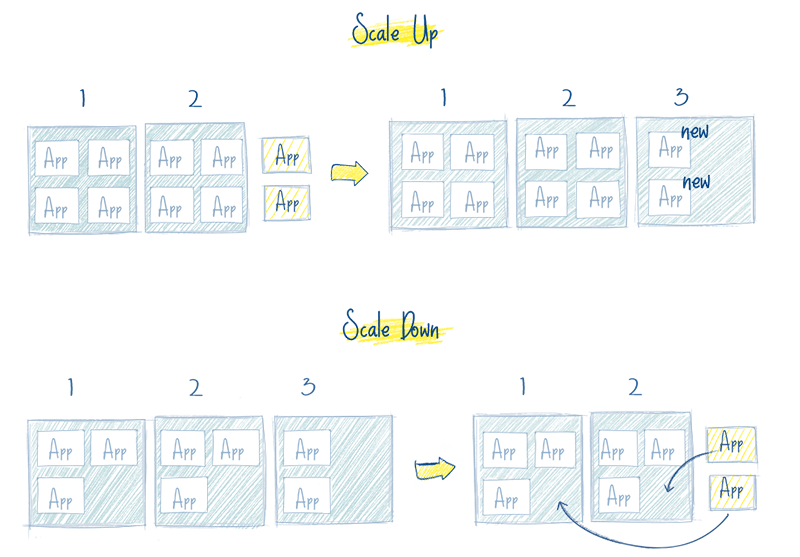

4. Out-of-the-box autoscaling

Features such as networking, load balancing, and replication are available in Kubernetes out of the box. Pods are meant to be disposable, so the apps running in them are usually designed to be stateless: if one pod goes down at any moment, the end user won’t notice a thing on their side, because their request will be immediately routed to another pod. The same thing happens when 200 users suddenly become 2,000: the Horizontal Pod Autoscaler can bring the necessary number of pods into play right when they are needed. And vice versa, it can automatically scale the number of pods down when the load decreases.

Kubernetes boosts app scalability, making sure it won’t crash at a critical moment.
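The jump from 200 to 2,000 users described above is handled by the Horizontal Pod Autoscaler, whose documented rule is desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), skipped when the ratio is within a tolerance of 1.0 (0.1 by default). Here is a Python sketch of just that arithmetic, assuming average CPU utilization as the metric. The function name and the min/max defaults are hypothetical, and the real controller adds rules (such as stabilization windows) that this ignores.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Core of the Horizontal Pod Autoscaler rule:
    desired = ceil(current_replicas * current_metric / target_metric)."""
    ratio = current_metric / target_metric
    # Within the tolerance band, leave the replica count alone to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the bounds configured on the autoscaler (minReplicas / maxReplicas).
    return max(min_replicas, min(max_replicas, desired))


# Traffic spike: 2 pods averaging 90% CPU against a 50% target.
print(desired_replicas(2, 90, 50))  # 4
# Quiet period: 4 pods averaging 20% CPU against the same target.
print(desired_replicas(4, 20, 50))  # 2
```

Because the rule is proportional, a load that doubles roughly doubles the pod count, and the max bound keeps a runaway metric from scaling the app indefinitely.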

5. Simplified Continuous Integration and Deployment (CI/CD)

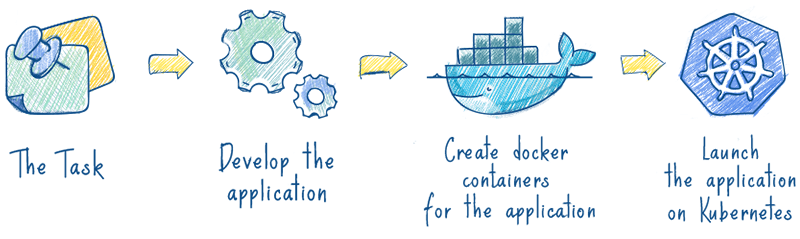

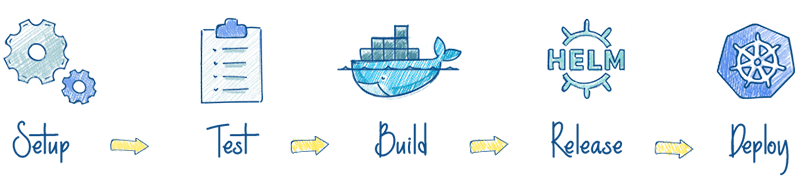

Kubernetes makes the CI/CD process less painful. You don’t have to be well-versed in Chef or Ansible: you just need to write a simple script and run it on any CI service. The script builds a new container image with your code and tells your Kubernetes cluster to deploy it, and Kubernetes then replaces the old pods with new ones. Besides, applications packaged into containers can be safely launched anywhere, from your personal PC to a cloud server, which makes them pretty easy to test.
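The “simple script” mentioned above can be quite small. Below is a minimal Python sketch, assuming the CI job has already built and pushed the image and that `kubectl` is configured for the target cluster. It relies on two real kubectl commands, `kubectl set image` and `kubectl rollout status`; the Deployment name, container name, registry, and tag are hypothetical, and it only prints the commands unless `dry_run=False`.

```python
from __future__ import annotations

import shlex
import subprocess


def deploy_commands(deployment: str, container: str, image: str, tag: str) -> list[list[str]]:
    """kubectl commands a CI job could run after pushing a freshly built image."""
    return [
        # Point the Deployment's container at the new image; Kubernetes then rolls out new pods.
        ["kubectl", "set", "image", f"deployment/{deployment}", f"{container}={image}:{tag}"],
        # Wait for the rollout; a failed rollout makes kubectl exit non-zero, failing the CI job too.
        ["kubectl", "rollout", "status", f"deployment/{deployment}"],
    ]


def deploy(deployment: str, container: str, image: str, tag: str, dry_run: bool = True) -> None:
    for cmd in deploy_commands(deployment, container, image, tag):
        print("+", shlex.join(cmd))
        if not dry_run:
            # Needs kubectl on the PATH and credentials for the target cluster.
            subprocess.run(cmd, check=True)


# Hypothetical names: "my-app" Deployment and container, image in a made-up registry.
deploy("my-app", "my-app", "registry.example.com/my-app", "abc123")
```

Tagging each image with something unique per build (for example, the commit hash) makes every deployment traceable and lets you roll back by redeploying an older tag.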

Containerized apps go hand in hand with the CI/CD principles of continuous testing, scalability, and quick deployment.

6. Durability

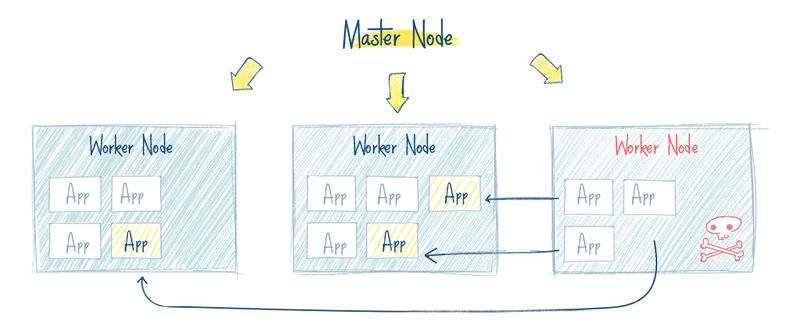

One of the reasons Kubernetes is so popular now is that the failure of a pod, or even an entire node, doesn’t have to interrupt the smooth operation of an application. In this case, Kubernetes creates replacement pods and schedules them onto healthy nodes until the desired number of replicas is running again. As long as the app runs several replicas spread across nodes, end users are unlikely to notice anything on their side.

A containerized infrastructure is self-healing and can provide uninterrupted operation of an app, even if a part of the infrastructure is out of order.
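Self-healing comes from controllers (for example, the ReplicaSet behind a Deployment) that keep comparing the desired number of replicas with what is actually running and fix the difference. The toy Python pass below imitates that reconciliation idea. `reconcile`, the `web-N` pod names, and the fewest-pods placement rule are hypothetical simplifications, not Kubernetes code.

```python
from __future__ import annotations


def reconcile(desired: int, pods: dict[str, str], healthy_nodes: list[str]) -> dict[str, str]:
    """One pass of a toy controller loop: make the running pods match the desired count.

    `pods` maps pod name -> node name. Pods on nodes that are no longer healthy
    count as lost; replacements go to the healthy node running the fewest pods.
    """
    if not healthy_nodes:
        return {}  # nowhere to run anything until a node recovers
    taken = set(pods)  # never reuse a name, even one that belonged to a lost pod
    alive = {pod: node for pod, node in pods.items() if node in healthy_nodes}
    n = 0
    while len(alive) < desired:
        n += 1
        name = f"web-{n}"
        if name in taken:
            continue
        taken.add(name)
        target = min(healthy_nodes, key=lambda node: list(alive.values()).count(node))
        alive[name] = target
    while len(alive) > desired:
        alive.popitem()  # drop the most recently added surplus pod
    return alive


pods = {"web-1": "node-a", "web-2": "node-b", "web-3": "node-c"}
# node-c fails: only node-a and node-b remain healthy.
pods = reconcile(3, pods, ["node-a", "node-b"])
print(pods)  # {'web-1': 'node-a', 'web-2': 'node-b', 'web-4': 'node-a'}
```

A real controller runs this comparison continuously, which is why the system converges back to the desired state without anyone stepping in.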

Conclusion

Kubernetes makes launching, migrating, and deploying containerized apps easier and safer. So, don't worry that an app won’t work properly after migration or that a server won’t handle a sudden inflow of users.

The only thing you have to keep in mind is that an app should initially be developed using the microservices approach. Otherwise, if the app is a monolith, it will be difficult to package it into containers. But this is just a tiny issue compared to all the possibilities you get in return, right?
